What if one took up the study of computation not just as a form of reason, but as a form of rhetoric? That might be one of the key questions animating media studies today. But it is not enough to simply describe the surface effects of computational media as in some sense creating or reproducing rhetorical forms. One would need to understand something of the genesis and forms of software and hardware themselves. Then one might have something to say not just about software as rhetoric, but software as ideology, computation as culture – as reproducing or even producing some limited and limiting frame for acting in and on the world.

Is the relation between the analog and the digital itself analog or digital? That might be one way of thinking the relation between the work of Alexander Galloway and Wendy Hui Kyong Chun. I wrote elsewhere about Galloway’s notion of software as a simulation of ideology. Here I take up Chun’s notion of software as an analogy for ideology, through a reading of her book Programmed Visions: Software and Memory (MIT Press, 2011).

Software as analogy is a strange thing. It illustrates an unknown through an unknowable. It participates in, and embodies, some strange properties of information. Chun: “digital information has divorced tangibility from permanence.” (5) Or as I put it in A Hacker Manifesto, the relation between information and the matter that supports it becomes arbitrary. Chun: “Software as thing has led to all ‘information’ as thing.” (6)

The history of the reification of information passes through the history of the production of software as a separate object of a distinct labor process and form of property. Chun puts this in more Foucauldian terms: “the remarkable process by which software was transformed from a service in time to a product, the hardening of relations into a thing, the externalization of information from the self, coincides with and embodies larger changes within what Michel Foucault has called governmentality.” (6)

Software coincides with a particular mode of governmentality, which Chun follows Foucault in calling neoliberal. I’m not entirely sure ‘neoliberal’ holds much water as a concept, but the general distinction would be that in liberalism, the state has to be kept out of the market, whereas in neoliberalism, the market becomes the model for the state. In both, there’s no sovereign power governing from above so much as a governmentality that produces self-activating subjects whose ‘free’ actions can’t be known in advance. Producing such free agents requires a management of populations, a practice of biopower.

Such might be a simplified explanation of the standard model. What Chun adds is the role of computing in the management of populations and the cultivation of individuals as ‘human capital.’ The neoliberal subject feels mastery and ‘empowerment’ via interfaces to computing which inform the user about past events and possible futures, becoming, in effect, the future itself.

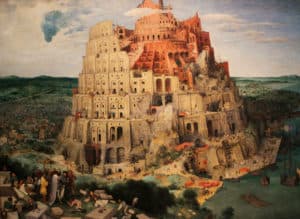

The ‘source’ of this mode of governmentality is source code itself. Code becomes logos: in the beginning was the code. Code becomes fetish. On some level the user knows code does not work magically on its own but merely controls a machine, but the user acts as if code had such a power. Neither the work of the machine nor the labor of humans figures much at all.

Code as logos organizes the past as stored data and presents it via an interface as the means for units of human capital to place their bets. “Software as thing is inseparable from the externalization of memory, from the dream and nightmare of an all encompassing archive that constantly regenerates and degenerates, that beckons us forward and disappears before our very eyes.” (11) As Berardi and others have noted, this is not the tragedy of alienation so much as what Baudrillard called the ecstasy of communication.

Software is a crucial component in producing the appearance of transparency, where the user can manage her or his own data and imagine they have ‘topsight’ over all the variables relevant to their investment decisions about their own human capital. Oddly, this visibility is produced by something invisible, that hides its workings. Hence computing becomes a metaphor for everything we believe is invisible yet generates visible effects. The economy, nature, the cosmos, love, are all figured as black boxes that can be known by the data visible on their interfaces.

The interface appears as a device of some kind of ‘cognitive mapping’, although not the kind Fredric Jameson had in mind, which would be an aesthetic intuition of the totality of capitalist social relations. What we get, rather, is a map of a map, of exchange relations among quantifiable units. On the screen of the device, whose workings we don’t know, we see clearly the data about the workings of other things we don’t know. Just as the device seems reducible to the code that makes the data appear, so too must the other systems it models be reducible to the code that makes their data appear.

But this is not so much an attribute of computing in general as a certain historical version of it, where software emerged as a second (and third, and fourth…) order way of presenting the kinds of things the computer could do. Chun: “Software emerged as a thing – as an iterable textual program – through a process of commercialization and commodification that has made code logos: code as source, code as true representation of action, indeed code as conflated with, and substituting for, action.” (18)

One side effect of the rise of software was the fantasy of the all-powerful programmer. I don’t think it is entirely the case that the coder is an ideal neoliberal subject, and not least because of the ambiguity as to whether the coder makes the rules or simply has to follow them. That creation involves rule-breaking is a romantic idea of the aesthetic, not the whole of it.

The very peculiar qualities of information, in part a product of this very technical-scientific trajectory, make the coder a primary form of an equally peculiar kind of labor. But labor is curiously absent from parts of Chun’s thinking. The figure of the coder as hacker may indeed be largely myth, but it is one that poses questions of agency that don’t typically appear when one thinks through Foucault.

Contra Galloway, Chun does not want to take as given the technical identity of software as means of control with the machine it controls. She wants to keep the materiality of the machine in view at all times. Code isn’t everything, even if that is how code itself gets us to think. “This amplification of the power of source code also dominates critical analyses of code, and the valorization of software as a ‘driving layer’ conceptually constructs software as neatly layered.” (21)

And hence code becomes fetish, as Donna Haraway has also argued. However, this is a strange kind of fetish, not entirely analogous to the religious, commodity, or sexual fetish. Where those fetishes supposedly offer imaginary means of control, code really does control things. One could even reverse the claim here. What if not accepting that code has control was the mark of a fetishism? One where particular objects have to be interposed as talismans of a power relationship that is abstract and invisible?

I think one could sustain this view and still accept much of the nuance of Chun’s very interesting and persuasive readings of key moments and texts in the history of computing. She argues, for instance, that code ought not to be conflated with its execution. One cannot run ‘source’ code itself. It has to be compiled. The relation between source code and machine code is not a mere technical identity. “Source code only becomes a source after the fact.” (24)
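A minimal sketch may make the gap between source and execution concrete. It uses Python's built-in `compile` and the standard `dis` module; the one-line program and the names `source`, `code` and `namespace` are illustrative assumptions, and Python bytecode stands in here only as an analogy for machine code, since it is itself run by an interpreter on top of the machine.

```python
import dis

# A hypothetical one-line 'source'; any Python text would do.
source = "total = 2 + 3"

# compile() translates the text into a code object.
# The interpreter executes that object, never the written line itself.
code = compile(source, "<sketch>", "exec")

# Execution happens here, in a namespace that did not exist in the text.
namespace = {}
exec(code, namespace)

# What actually ran is a sequence of low-level instructions.
instructions = [ins.opname for ins in dis.get_instructions(code)]

print(namespace["total"])
print(instructions)
```

The text only counts as the ‘source’ of the result once something else has translated and run it, which is one way of reading Chun's claim that source code becomes a source after the fact.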

Mind you, one could push this even further than Chun does. She grounds source code in machine code and machine code in machine architectures. But these in turn only run if there is an energy ‘source’, and can only exist if manufactured out of often quite rare materials – as Jussi Parikka shows in his Geology of Media. All of which, in this day and age, are subject to forms of computerized command to bring such materials and their labors together. To reduce computers to command, and indeed not just computers but whole political economies, might not be so much an anthropomorphizing of the computer as a recognition that information has become a rather nonhuman thing.

I would argue that perhaps the desire to see the act of commanding an unknown, invisible device through interface, through software, in which code appears as source and logos is at once a way to make sense of neoliberal political-economic opacity and indeed irrationality. But perhaps command itself is not quite so commanding, and only appears as a gesture that restores the subject to itself. Maybe command is not ‘empowering’ of anything but itself. Information has control over both objects and subjects.

Here Chun usefully recalls a moment from the history of computing – the “ENIAC girls.” (29) This key moment in the history of computing had a gendered division of labor, where men worked out the mathematical problem and women had to embody the problem in a series of steps performed by the machine. “One could say that programming became programming and software became software when the command structure shifted from commanding a ‘girl’ to commanding a machine.” (29)

Although Chun does not quite frame it as such, one could see the postwar career of software as the result of struggles over labor. Software removes the need to program every task directly in machine language. Software offers the coder an environment in which to write instructions for the machine, or the user to write problems for the machine to solve. Software appears via an interface that makes the machine invisible but offers instead ways to think about the instructions or the problem in a way more intelligible to the human and more efficient in terms of human abilities and time constraints.

Software obviates the need to write in machine language, which made programming a higher order task, based on mathematical and logical operations rather than machine operations. But it also made programming available as a kind of industrialized labor. Certain tasks could be automated. The routine running of the machine could be separated from the machine’s solution of particular tasks. One could even see it as in part a kind of ‘deskilling.’

The separation of software from hardware also enables the separation of certain programming tasks in software from each other. Hence the rise of structured programming as a way of managing quality and labor discipline when programming becomes an industry. Structured programming enables a division of labor and secures the running of the machine from routine programming tasks. The result might be less efficient from the point of view of organizing machine ‘labor’ but more efficient from the point of view of organizing human labor. Software recruits the machine into the task of managing itself. Structured programming is a step towards object-oriented programming, which further hides the machine, and also the interior of other ‘objects’ from the ones with which the programmer is tasked within the division of labor.

As Chun notes, it was Charles Babbage more than Marx who foresaw the industrialization of cognitive tasks and the application of the division of labor to them. Neither foresaw software as a distinct commodity; or (I would add) one that might be the product of a quite distinct kind of labor. More could be said here about the evolution of the private property relation that will enable software to become a thing made by labor rather than a service that merely applies naturally-occurring mathematical relations to the running of machines.

Crucial to Chun’s analysis is the way source code becomes a thing that erases execution from view. It hides the labor of the machine, which becomes something like one of Derrida’s specters. It makes the actions of the human at the machine appear as a powerful relation. “Embedded within the notion of instruction as source and the drive to automate computing – relentlessly haunting them – is a constantly repeated narrative of liberation and empowerment, wizards and (ex)slaves.” (41)

I wonder if this might be a general quality of labor processes, however. A car mechanic does not need to know the complexities of the metallurgy involved in making a modern engine block. She or he just needs to know how to replace the blown gasket. What might be more distinctive is the way that these particular ‘objects’, made of information stored on some random material surface or other, can also be forms of private property, and can be designed in such a way as to render the information in which they traffic also private property. There might be more distinctive features in how the code-form interacts with the property-form than in the code-form alone.

If one viewed the evolution of those forms together as the product of a series of struggles, one might then have a way of explaining the particular contours of today’s devices. Chun: “The history of computing is littered with moments of ‘computer liberation’ that are also moments of greater obfuscation.” (45) This all turns on the question of who is freed from what. But in Chun such things are more the effects of a structure than the result of a struggle or negotiation.

Step by step, the user is freed from not only having to know about her or his machine, but then also from ownership of what runs on the machine, and then from ownership of the data she or he produces on the machine. There’s a question of whether the first kind of ‘liberation’ – from having to know the machine – necessarily leads to the other all on its own, or rather in combination with the conflicts that drove the emergence of a software-driven mode of production and its intellectual property form.

In short: Programmers appeared to become more powerful but more remote from their machines; users appeared to become more powerful but more remote from their machines. The programmer and then the user work not with the materiality of the machine but with its information. Information becomes a thing, perhaps in the sense of a fetish, but perhaps also in the senses of a form of property and an actual power.

But let’s not lose sight of the gendered thread to the argument. Programming is an odd profession, in that at a time when women were making inroads into once male-dominated professions, programming went the other way, becoming more a male domain. Perhaps it is because it started out as a kind of feminine clerical labor but became – through the intermediary of software – a priestly caste, an engineering and academic profession. Perhaps its male bias is in part an artifact of timing: programming becomes a profession rather late. I would compare it then to the well-known story of how obstetrics pushed the midwives out of the birth-business, masculinizing and professionalizing it, now over a hundred years ago, but then more recently challenged as a male-dominated profession by the re-entry of women as professionals.

My argument would be that while the timing is different, programming might not be all that different from other professions in its claims to mastery and exclusive knowledge based on knowledge of protocols shorn of certain material and practical dimensions. In this regard, is it all that different from architecture?

What might need explaining is rather how software intervened in, and transforms, all the professions. Most all of them have been redefined as kinds of information-work. In many cases this can lead to deskilling and casualization, on the one hand, and to the circling of the wagons around certain higher-order, but information based, functions on the other. As such, it is not that programming is an example of ‘neoliberalism’, so much as that neoliberalism has become a catch-all term for a collection of symptoms of the role of computing in its current form in the production of information as a control layer.

Hence my problem is with the ambiguity in formulations such as this: “Software becomes axiomatic. As a first principle, it fastens in place a certain neoliberal logic of cause and effect, based on the erasure of execution and the privileging of programming…” (49) What if it is not that software enables neoliberalism, but rather that neoliberalism is just a rather inaccurate way of describing a software-centric mode of production?

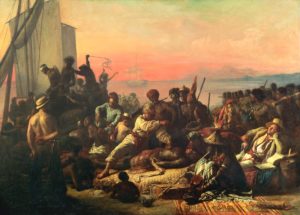

The invisible machine joins the list of other invisible operators: slaves, women, workers. They don’t need to be all that visible so long as they do what they’re told. They need only to be seen to do what they are supposed to do. Invisibility is the other side of power. To the extent that software has power or is power it isn’t an imaginary fetish.

Rather than fetish and ideology, perhaps we could use some different concepts, what Bogdanov calls substitution and the basic metaphor. In this way of thinking, actual organizational forms through which labor is controlled get projected onto other, unknown phenomena. We substitute the form of organization we know and experience for forms we don’t know – life, the universe, etc. The basic metaphors in operation are thus likely to be those of the dominant form of labor organization, and its causal model will become a whole worldview.

That seems to me a good basic sketch for how code, software and information became terms that could be substituted into any and every problem, from understanding the brain to understanding love, nature or evolution. But where Chun wants to stress what this hides from view, perhaps we could also look at the other side, at what it enables.

Chun: “This erasure of execution through source code as source creates an intentional authorial subject: the computer, the program, or the user, and this source is treated as the source of meaning.” (53) Perhaps. Or perhaps it creates a way of thinking about relations of power, even of mapping them, in a world in which both objects and subjects can be controlled by information.

As Chun acknowledges, computers have become metaphor machines. As universal machines in Turing’s mathematical sense, they become universal machines also in a poetic sense. Which might be a way of explaining why Galloway thinks computers are allegorical. I think for him allegory is mostly spatial, the mapping of one terrain onto another. I think of allegory as temporal, as the paralleling of one block of time with another, indeed as something like Bogdanov’s basic metaphor, where one cause-effect sequence is used to explain another one.

The computer is in Chun’s terms a sort of analogy, or in Galloway’s a simulation. This is the sense in which for Chun the relation between analog and digital is analog, while for Galloway it is digital. Seen from the machine side, one sees code as an analogy for the world it controls; seen from the software side, one sees a digital simulation of the world to be controlled. Woven together with Marx’s circuit of money -> commodity -> money there is now another: digital -> analog -> digital. The question of the times might be how the former got subsumed into the latter.

For Chun, the promise of what the ‘intelligence community’ calls ‘topsight’ through computation proves illusory. The production of cognitive maps via computation obscures the means via which they are made. But is there not a kind of modernist aesthetic at work here, where the truth of appearances is in revealing the materials via which appearances are made? I want to read her readings in the literature of computing a bit differently. I don’t think it’s a matter of the truth of code lying in its execution by and in and as the machine. If so, why stop there? Why not further relate the machine to its manufacture? I am also not entirely sure one can say, after the fact, that software encodes a neoliberal logic. Rather, one might read for signs of struggles over what kind of power information could become.

This brings us to the history of interfaces. Chun starts with the legendary SAGE air defense network, the largest computer system ever built. It used 60,000 vacuum tubes and took 3 megawatts to run. It was finished in 1963 and already obsolete, although it led to the SABRE airline reservation system. Bits of old SAGE hardware were used in film sets wherever blinky computers were called for – as in Logan’s Run.

SAGE is an origin story for ideas of real time computing and interface design, allowing ‘direct’ manipulation that simulates engagement by the user. It is also an example of what Brenda Laurel would later think in terms of Computers as Theater. Like a theater, computers offer what Paul Edwards calls a Closed World of interaction, where one has to suspend disbelief and enter into the pleasures of a predictable world.

The choices offered by an interface make change routine. The choices offered by an interface shape notions of what is possible. We know that our folders and desktops are not real but we use them as if they were anyway. (Mind you, a paper file is already a metaphor. The world is no better or worse represented by my piles of paper folders than it is by my ‘piles’ of digital ‘folders’, even if they are not quite the same kind of representation).

Chun: “Software and ideology fit each other perfectly because both try to map the tangible effects of the intangible and to posit the intangible causes through visible cues.” (71) Perhaps this is one response to the disorientation of the postmodern moment. Galloway would say rather that software simulates ideology. I think in my mind it’s a matter of software emerging as a basic metaphor, a handy model from the leading labor processes of the time substituted for processes unknown.

So the cognitive mapping Jameson called for is now something we all have to do all the time, and in a somewhat restricted form – mapping data about costs and benefits, risks and rewards – rather than grasping the totality of commodified social relations. There’s an ‘archaeology’ for these aspects of computing too, going back to Vannevar Bush’s legendary article ‘As we may think’, with its model of the memex, a mechanical machine for studying the archive, making associative indexing links and recording intuitive trails.

In perhaps the boldest intuition in the book, Chun thinks that this was part of a general disposition, an ‘episteme’, at work in modern thought, where the inscrutable body of a present phenomenon could be understood as the visible product of an invisible process that was in some sense encoded. Such a process requires an archive, a past upon which to work, and a process via which future progress emerges out of past information.

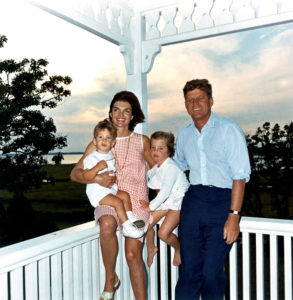

Computing meets and creates such a worldview. JCR Licklider, Douglas Engelbart and other figures in postwar computing wanted computers that were networked, ran in real time and had interfaces that allowed the user to ‘navigate’ complex problems while ‘driving’ an interface that could be learned step by step. Chun: “Engelbart’s system underscores the key neoliberal quality of personal empowerment – the individual’s ability to see, steer, and creatively destroy – as vital social development.” (83) To me it makes more sense to say that the symptoms shorthanded by the commonplace ‘neoliberal’ are better thought of as ‘Engelbartian.’ His famous ‘demo’ of interactive computing for ‘intellectual workers’ ought now to be thought of as the really significant cultural artifact of 1968.

Chun: “Software has become a common-sense shorthand for culture, and hardware shorthand for nature… In our so-called post-ideological society, software sustains and depoliticizes notions of ideology and ideology critique. People may deny ideology, but they don’t deny software – and they attribute to software, metaphorically, greater powers than have been attributed to ideology. Our interactions with software have disciplined us, created certain expectations about cause and effect, offered us pleasure and power – a way to navigate our neoliberal world – that we believe should be transferrable elsewhere. It has also fostered our belief in the world as neoliberal; as an economic game that follows certain rules.” (92) But does software really ‘depoliticize’, or does it change what politics is or could be?

Digital media program both the future and the past. The archive is first and last a public record of private property (which was of course why the Situationists practiced détournement, to treat it not as property but as a commons.) Political power requires control of the archive, or better, of memory – as Google surely have figured out. Chun: “This always there-ness of new media links it to the future as future simple. By saving the past, it is supposed to make knowing the future easier. The future is technology because technology enables us to see trends and hence to make projections – it allows us to intervene on the future based on stored programs and data that compress time and space.” (97)

Here is what Bogdanov would recognize as a basic metaphor for our times: “To repeat, software is axiomatic. As a first principle, it fastens in place a certain logic of cause and effect, a causal pleasure that erases execution and reduces programming to an act of writing.” (101) Mind, genes, culture, economy, even metaphor itself can be understood as software.

Software produces order from order, but as such it is part of a larger episteme: “the drive for software – for an independent program that conflates legislation and execution – did not arise solely from within the field of computation. Rather, code as logos existed elsewhere and emanated from elsewhere – it was part of a larger epistemic field of biopolitical programmability.” (103) As indeed Foucault’s own thought may be too.

In a particularly interesting development, Chun argues that both computing and modern biology derive from this same episteme. It is not that biology developed a fascination with genes as code under the influence of computing. Rather, both computing and genetics develop out of the same space of concepts.

Actually, early cybernetic theory had no concept of software. It isn’t in Norbert Wiener or Claude Shannon. Their work treated information as signal. In the former, the signal is feedback, and in the latter, the signal has to defeat noise. How then did information thought of as code and control develop both in cybernetics and also in biology? Both were part of the same governmental drive to understand the visible as controlled by an invisible program that derives present from past and mediates between populations and individuals.

A key text for Chun here is Erwin Schrödinger’s ‘What is Life?’ (1944), which posits the gene as a kind of ‘crystal’. He saw living cells as run by a kind of military or industrial governance, each cell following the same internalized order(s). This resonates with Shannon’s conception of information as entropy (a measure of randomness) and Wiener’s of information as negative entropy (a measure of order).

Schrödinger’s text made possible a view of life that was not vitalist – no special spirit is invoked – but which could explain organization above the level of a protein, which was about the level of complexity that Needham and other biochemists could explain at the time. But it comes at the price of substituting ‘crystal’, or ‘form’ for the organism itself.

Drawing on early Foucault, Chun thinks some key elements of a certain episteme of knowledge are embodied in Schrödinger’s text. Foucault’s interest was in discontinuities. Hence his metaphor of ‘archaeology,’ which gives us the image of discontinuous strata. It was never terribly clear in Foucault what accounts for ‘mutations’ that form the boundaries of these discontinuities. The whole image of ‘archaeology’ presents the work of the philosopher of knowledge as a sort of detached ‘fieldwork’ in the geological strata of the archive.

Chun: “The archeological project attempts to map what is visible and what is articulable.” (113) One has to ask whether Foucault’s work was perhaps more an exemplar than a critique of a certain mode of knowledge. Foucault said that Marx was a thinker who swam in the 19th century as a fish swims in water. Perhaps now we can say that Foucault is a thinker who swam in the 20th century as a fish swims in water. Computing, genetics and Foucault’s archaeology are about discontinuous and discrete knowledge.

Still, he has his uses. Chun puts Foucault to work to show how there is a precursor to the conceptual architecture of computing in genetics and eugenics. The latter was a political program, supposedly based on genetics, whose mission was improving the ‘breeding stock’ of the human animal. But humans proved very hard to program, so perhaps that drive ended up in computing instead.

The ‘source’ for modern genetics is usually acknowledged to be the rediscovered experiments of Gregor Mendel. Mendelian genetics is in a sense ‘digital’. The traits he studied are binary pairs. The appearance of the pea (phenotype) is controlled by a code (genotype). The recessive gene concept made eugenic selective breeding rather difficult as an idea. But it is a theory of a ‘hard’ inheritance, where nature is all and nurture does not matter. As such, it could still be used in debates about forms of biopower on the side of eugenic rather than welfare policies.
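The difficulty the recessive gene poses for selective breeding can be sketched in a few lines. This is a toy model of a single Mendelian trait, not anything drawn from Chun's text: the allele letters `A` and `a`, the function names `phenotype` and `cross`, and the choice of two carrier parents are all assumptions made for illustration.

```python
from collections import Counter
from itertools import product

def phenotype(genotype):
    # The dominant allele 'A' masks the recessive 'a' in the visible trait.
    return "dominant" if "A" in genotype else "recessive"

def cross(parent1, parent2):
    # Each parent passes on one of its two alleles;
    # enumerate the four equally likely pairings.
    return ["".join(sorted(pair)) for pair in product(parent1, parent2)]

# Two carrier parents, both showing the dominant trait.
offspring = cross("Aa", "Aa")

genotypes = Counter(offspring)
phenotypes = Counter(phenotype(g) for g in offspring)

print(genotypes)   # Counter({'Aa': 2, 'AA': 1, 'aa': 1})
print(phenotypes)  # Counter({'dominant': 3, 'recessive': 1})
```

Three of the four offspring look alike, yet two of those three still carry the hidden allele. Selecting on appearance alone therefore cannot read the ‘code’ directly, which is roughly why the recessive gene concept troubled the eugenic idea of breeding a trait out.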

Interestingly, Chun makes the (mis)use of Mendelian genetics as a eugenic theory a precursor to cybernetics. “Eugenics is based on a fundamental belief in the knowability of the human body, an ability to ‘read’ its genes and to program humanity accordingly…. Like cybernetics, eugenics is means of ‘governing’ or navigating nature.” (122) The notion of information as a source code was already at work in genetics long before either computing or modern biology. Control of, and by, code as a means of fostering life, agency, communication and the qualities of freely acting human capital is then an idea with a long history. One might ask whether it might not correspond to certain tendencies in the organization of labor at the time.

What links machinic and biological information systems is the idea of some kind of archive of information out of which a source code articulates future states of a system. But memory came to be conflated with storage. The active process of both forgetting and remembering turns into a vast and endless storage of data.

Programmed Visions is a fascinating and illuminating read. I think where I would want to diverge from it is at two points. One has to do with the ontological status of information, and the second has to do with its political-economic status. In Chun I find that information is already reduced to the machines that execute its functions, and then those machines are inserted into a historical frame that sees only governmentality and not a political economy.

Chun: “The information travelling through computers is not 1s and 0s; beneath binary digits and logic lies a messy, noisy world of signals and interference. Information – if it exists – is always embodied, whether in a machine or an animal.” (139) Yes, information has no autonomous and prior existence. In that sense neither Chun nor Galloway nor I are Platonists. But I don’t think information is reducible to the material substrate that carries it.

Information is a slippery term, meaning both order, neg-entropy, form, on the one hand, and something like signal or communication on the other. These are related aspects of the same (very strange) phenomenon, but not the same. The way I would reconstruct technical-intellectual history would put the stress on the dual production of information both as a concept and as a fact in the design of machines that could be controlled by it, but where information is meant as signal, and as signal becomes the means of producing order and form.

One could then think about how information was historically produced as a reality, in much the same way that energy was produced as a reality in an earlier moment in the history of technics. In both cases certain features of natural history are discovered and repeated within technical history. Or rather, features of what will retrospectively become natural history. For us there was always information, just as for the Victorians there was always energy (but no such thing as information). The nonhuman enters human history through the inhuman mediation of a technics that deploys it.

So while I take the point of refusing to let information float free and become a kind of new theological essence or given, wafting about in the ‘cloud’, I think there is a certain historical truth to the production of a world where information can have arbitrary and reversible relations to materiality. Particularly when that rather unprecedented relation between information and its substrate is a control relation. Information controls other aspects of materiality, and also controls energy, the third category here that could do with a little more thought. Of the three aspects of materiality (matter, energy and information), the last now appears as a form of controlling the other two.

Here I think it worth pausing to consider information not just as governmentality but also as commodity. Chun: “If a commodity is, as Marx famously argued, a ‘sensible supersensible thing’, information would seem to be its complement: a supersensible sensible thing…. That is, if information is a commodity, it is not simply due to historical circumstances or to structural changes, it is also because commodities, like information, depend on a ghostly abstract.” (135) As retrospective readers of how natural history enters social history, perhaps we need to re-read Marx from the point of view of information. He had a fairly good grasp of thermodynamics, as Amy Wendling observes, but information as we know it today did not yet exist.

To what extent is information the missing ‘complement’ to the commodity? There is only one kind of (proto)information in Marx, and that is the general equivalent – money. The materiality of a thing – let’s say ‘coats’ – its use value, is doubled by its informational quantity, its exchange value, and it is exchanged against the general equivalent, or information as quantity.

But notice the missing step. Before one can exchange the thing ‘coats’ for money, one needs the information ‘coats’. What the general equivalent meets in the market is not the thing but another kind of information – let’s call it the general non-equivalent – a general, shared, agreed kind of information about the qualities of things.

Putting these sketches together, one might then ask what role computing plays in the rise of a political economy (or a post-political one), in which not only is exchange value dominant over use value, but where use value further recedes behind the general non-equivalent, or information about use value. In such a world, fetishism would be mistaking the body for the information, not the other way around, for it is the information that controls the body.

Thus we want to think bodies matter, lives matter, things matter – when actually they are just props for the accumulation of information and information as accumulation. ‘Neo’liberal is perhaps too retro a term for a world which does not just set bodies ‘free’ to accumulate property, but sets information free from bodies, and makes information property in itself. There is no biopower, as information is not there to make better bodies, but bodies are just there to make information.

Unlike Kittler, Chun is aware of the difficulties of a fully reductive approach to media. For her it is more a matter of keeping in mind both the invisible and visible, the code and what executes it. “The point is not to break free of this sourcery but rather to… make our computers more productively spectral by exploiting the unexpected possibilities of source code as fetish.” (20)

I think there may be more than one kind of non-visible in the mix here, though. So while there are reasons to anchor information in the bodies that display it, there are also reasons to think the relation of different kinds of information to each other. Perhaps bodies are shaped now by more than one kind of code. Perhaps it is no longer a time in which to use Foucault and Derrida to explain computing, but rather to see them as side effects of the era of computing itself.
